Overview

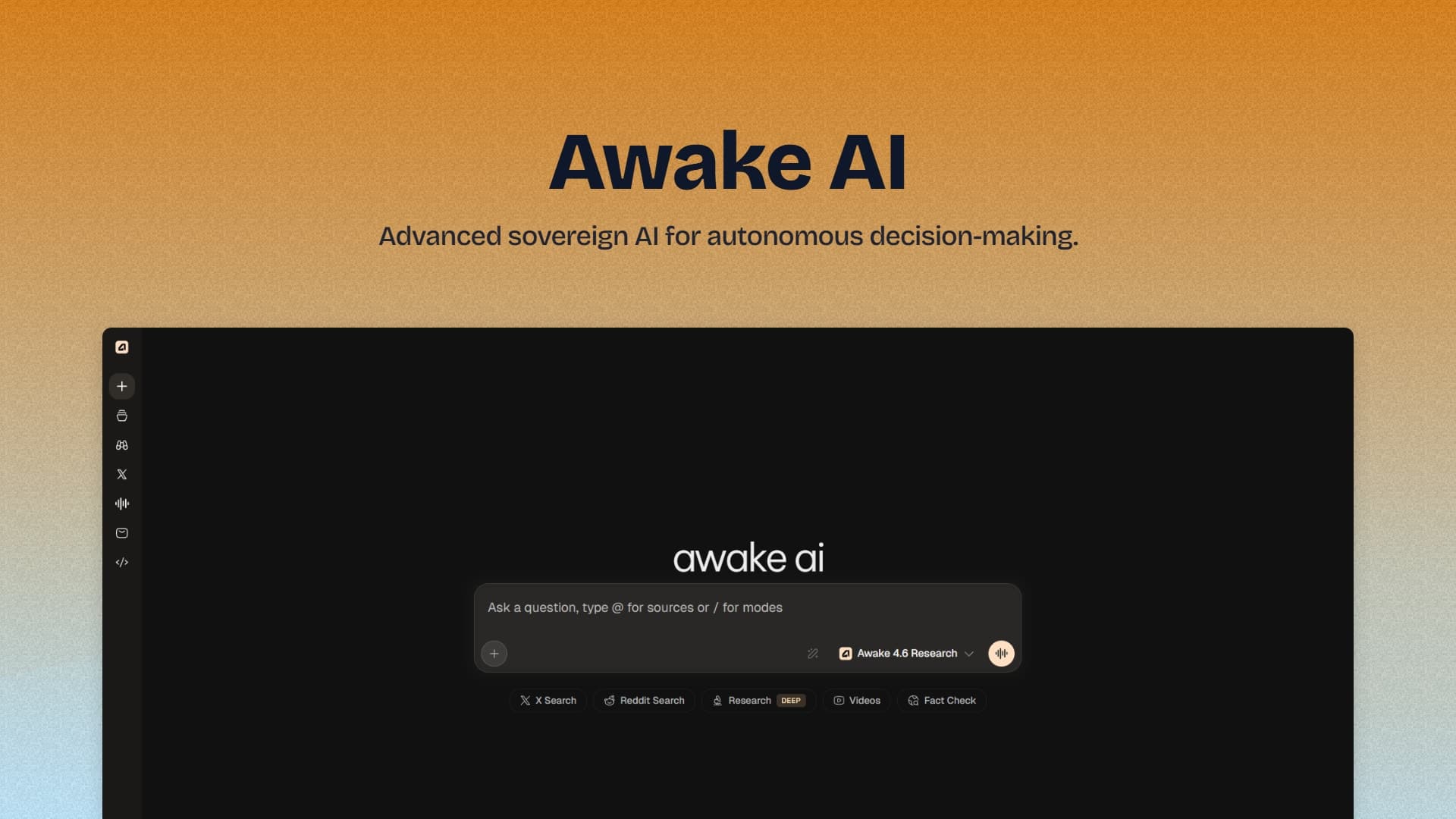

Modern AI systems often emphasize raw capability while lacking transparency, adaptability, and control. Awake AI was developed to address this gap by delivering a sovereign, scalable conversational AI model designed for high performance, contextual intelligence, and system-level flexibility.

Rather than functioning as a simple application layer, Awake AI is engineered as a model-driven system capable of real-time reasoning, contextual understanding, and dynamic response generation.

The system is designed to provide low-latency, high-quality conversational outputs while maintaining an architecture that can evolve alongside advancements in large language models and AI infrastructure.

Problem Statement

Despite rapid progress in conversational AI, most implementations exhibit critical limitations at both the model and system levels. These systems often suffer from high response latency under real-world usage conditions, making interactions feel slow and disconnected.

Many architectures are rigid and tightly coupled, which restricts adaptability and makes future enhancements difficult to implement. Support for extending or integrating new model capabilities is often limited, so these systems become outdated quickly. Weak contextual memory is another major challenge: it makes conversations incoherent and erodes user trust.

The primary objective was to design a system that behaves like a production-grade AI model, capable of delivering consistent and context-aware responses while maintaining real-time inference performance. At the same time, the system needed to be scalable, modular, and maintainable over the long term without introducing unnecessary complexity.

System Architecture Strategy

Modular Model-Oriented Backend

The system was architected using a modular backend approach powered by Node.js, structured around model orchestration and inference pipelines rather than traditional request-response handling. This architectural decision enables independent scaling of inference and processing components while allowing flexible integration of multiple AI models and services. It also ensures a clear separation between model logic, context handling, and API layers, ultimately improving system reliability through better fault isolation and maintainability.
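As an illustration of the separation described above, the following is a minimal sketch of a model orchestration layer with fallback between models. All names here (`ModelAdapter`, `ModelOrchestrator`, `flakyModel`, `echoModel`) are hypothetical and do not come from the Awake AI codebase; the stub adapters stand in for real model backends.

```typescript
// Hypothetical sketch: none of these names come from the Awake AI codebase.
// Each model backend sits behind the same small interface.
interface ModelAdapter {
  readonly name: string;
  generate(prompt: string): Promise<string>;
}

class ModelOrchestrator {
  private readonly adapters = new Map<string, ModelAdapter>();

  register(adapter: ModelAdapter): void {
    this.adapters.set(adapter.name, adapter);
  }

  // Try each named model in order. A failure in one adapter is recorded
  // and the next adapter is tried, so one faulty backend does not fail
  // the whole request.
  async generate(
    prompt: string,
    preference: string[],
  ): Promise<{ model: string; text: string }> {
    const errors: string[] = [];
    for (const name of preference) {
      const adapter = this.adapters.get(name);
      if (!adapter) {
        errors.push(`${name}: not registered`);
        continue;
      }
      try {
        return { model: name, text: await adapter.generate(prompt) };
      } catch (err) {
        errors.push(`${name}: ${err instanceof Error ? err.message : String(err)}`);
      }
    }
    throw new Error(`all models failed (${errors.join("; ")})`);
  }
}

// Stub adapters standing in for real model backends (assumptions).
const flakyModel: ModelAdapter = {
  name: "primary",
  generate: async () => {
    throw new Error("timeout");
  },
};

const echoModel: ModelAdapter = {
  name: "fallback",
  generate: async (prompt) => `echo: ${prompt}`,
};
```

In this sketch the API layer would only ever call `ModelOrchestrator.generate`, so adding or replacing a model means registering another adapter rather than changing request handling code.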

Real-Time Inference Pipeline

To achieve responsiveness comparable to advanced AI systems, Awake AI implements a real-time inference pipeline optimized for low latency and high throughput. This design allows the system to return near-instant responses while supporting smooth multi-user interactions. It also handles contextual data and input streams efficiently, which reduces computational overhead during inference and keeps performance consistent under load.
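The text does not say how the pipeline keeps latency low. One common technique, assumed here purely for illustration, is to stream partial output to the client as it is produced instead of waiting for the full reply. The sketch below uses a hypothetical `fakeModelStream` generator in place of a real model and a hypothetical `relay` function that forwards each token immediately.

```typescript
// Hypothetical stand-in for a model that emits its reply one token at a time.
async function* fakeModelStream(reply: string): AsyncGenerator<string> {
  for (const token of reply.split(" ")) {
    // Yield control between tokens, as a network-backed model would.
    await Promise.resolve();
    yield token;
  }
}

// Forward each token to the client as soon as it arrives, and also
// return the assembled reply so it can be stored as context afterwards.
async function relay(
  tokens: AsyncIterable<string>,
  send: (chunk: string) => void,
): Promise<string> {
  const parts: string[] = [];
  for await (const token of tokens) {
    send(token);
    parts.push(token);
  }
  return parts.join(" ");
}
```

With this shape, the user sees the first token after one model step rather than after the whole reply, which is usually what makes a conversational interface feel responsive.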

Scalable Interaction Layer

The interaction layer was built using Next.js to deliver a fast and responsive user experience that aligns with the capabilities of the underlying AI model. By leveraging modern rendering techniques, the system achieves rapid interface loading and smooth hydration, enabling fluid conversational updates in real time. This ensures seamless synchronization between user input and model-generated responses, creating a natural and engaging interaction experience.

Data & Context Management Layer

A structured data layer powered by PostgreSQL and RESTful APIs supports context persistence and state management, which are critical for maintaining conversational continuity. This layer enables reliable tracking of conversational context and efficient handling of session data, ensuring that interactions remain coherent over time. It also provides secure and scalable data communication, forming the foundation for advanced capabilities such as long-term memory and personalized AI experiences.
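To make the idea of context persistence concrete, here is a minimal sketch of a storage boundary for conversation history. The `Message` shape, the `ContextStore` interface, and `buildPrompt` are assumptions made for illustration, not the project's actual schema or API. The in-memory class stands in for a PostgreSQL-backed implementation of the same interface.

```typescript
// Hypothetical message shape; the real schema is not given in the text.
type Role = "user" | "assistant";

interface Message {
  sessionId: string;
  role: Role;
  content: string;
}

// Storage boundary. A PostgreSQL-backed class could implement the same
// interface (most likely with async methods) without changing callers' logic.
interface ContextStore {
  append(message: Message): void;
  recent(sessionId: string, limit: number): Message[];
}

// In-memory stand-in; rows are assumed to be appended in chronological order.
class InMemoryContextStore implements ContextStore {
  private readonly rows: Message[] = [];

  append(message: Message): void {
    this.rows.push(message);
  }

  // Return the last `limit` messages of one session, oldest first.
  recent(sessionId: string, limit: number): Message[] {
    if (limit <= 0) return [];
    return this.rows.filter((m) => m.sessionId === sessionId).slice(-limit);
  }
}

// Combine recent history with the new input into a single prompt string.
function buildPrompt(
  store: ContextStore,
  sessionId: string,
  userInput: string,
  limit: number = 4,
): string {
  const history = store
    .recent(sessionId, limit)
    .map((m) => `${m.role}: ${m.content}`);
  return history.concat(`user: ${userInput}`).join("\n");
}
```

Bounding the history with `limit` keeps prompt size predictable, while the full conversation remains available in storage for features such as long-term memory.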

Core Capabilities

Awake AI is designed to deliver contextual reasoning by generating responses that are coherent, relevant, and aligned with the ongoing conversation. The system achieves real-time inference performance through optimized processing pipelines, enabling fast and natural interactions. Its modular architecture allows seamless integration of multiple AI models, ensuring adaptability as new technologies emerge. Additionally, the infrastructure is built with an API-first approach, making it highly scalable and suitable for deployment across diverse environments.

Key Learnings

The development of Awake AI highlighted that AI performance is not solely dependent on model capability but equally on system architecture and design decisions. Achieving real-time inference requires careful optimization of backend processes and data flow, while effective context management plays a crucial role in maintaining conversational intelligence. The project also reinforced the importance of modular architectures, which provide the flexibility needed to adapt and evolve AI systems over time without introducing unnecessary complexity.

Outcome

Awake AI is a production-ready conversational AI system built on a scalable, modular architecture tailored for modern AI deployment. It delivers optimized real-time inference while providing a strong foundation for multi-model integration and advanced AI workflows.

Overall, the project demonstrates the ability to design and implement intelligent, high-performance AI systems that align with modern standards in AI engineering, software architecture, and DevSecOps practices.